The initial phase of any comprehensive SEO strategy involves enhancing your technical SEO in the UK. It’s crucial to make sure that your website is in excellent condition to boost the flow of natural traffic, improve keyword rankings, and increase conversions.

Regardless of the sector in which your brand or company operates in the UK, technical SEO principles have become increasingly significant. In mid-June 2021, Google began rolling out a significant ranking update known as “The Page Experience Update.” This update aims to enhance the user experience by giving preference to pages that deliver high-quality performance, such as fast loading times and a stable, non-shifting layout.

Google has historically considered certain page experience signals, including mobile-friendliness, HTTPS security, and the absence of intrusive interstitials. Additionally, it has been emphasizing fast-loading pages since 2010. With the 2021 Page Experience update, however, Google introduced three new metrics designed to gauge both speed and the overall page experience, known as Core Web Vitals.

Below is our ultimate technical SEO checklist for businesses in the UK.

1. Enhance Your Page Experience with Core Web Vitals Assessment

Google has introduced a fresh set of criteria for page experience, merging Core Web Vitals with the search signals it has introduced since 2015. These encompass:

- Mobile-friendliness (February 26, 2015)

- More Safe Browsing Help for Webmasters (September 07, 2016)

- HTTPS security adoption (November 04, 2016)

- Guidelines against intrusive interstitials, so users can easily access content on mobile (August 23, 2016)

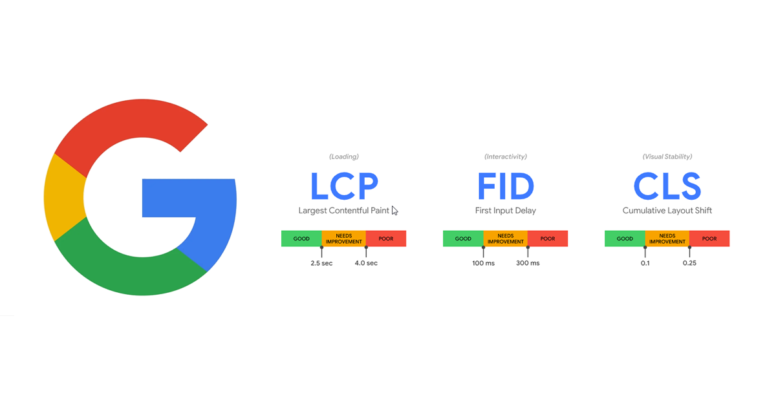

In case you need a quick reminder from my side, Google’s Core Web Vitals consist of three key factors:

- Largest Contentful Paint (LCP): LCP gauges the loading performance of the most substantial content element on a page, striving for a loading time of 2.5 seconds or less for an optimal user experience.

- First Input Delay (FID): FID measures the delay between a user’s first interaction with a page (such as a click or tap) and the moment the browser can begin responding to it. To ensure a positive user experience, a page should aim for an FID of under 100 milliseconds.

- Cumulative Layout Shift (CLS): CLS evaluates the visual stability of on-screen elements. It is a unitless score rather than a time, and websites should target a CLS of less than 0.1 for a seamless user experience.
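As a rough illustration, the three targets above can be turned into a simple rating check. This is a minimal sketch, not an official tool: the "good" limits come from the list above, while the "poor" limits (4.0 s, 300 ms, 0.25) are Google's published cut-offs and are not stated in this article. The metric names `lcp_seconds`, `fid_ms`, and `cls` are hypothetical labels chosen for the example.

```python
# "Good" limits are the targets listed above; "poor" limits are Google's
# published cut-offs and are an addition not found in this article.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "fid_ms": (100, 300),
    "cls": (0.1, 0.25),  # CLS is a unitless score
}


def rate_metric(name: str, value: float) -> str:
    """Return 'good', 'needs improvement', or 'poor' for one metric value."""
    good_limit, poor_limit = THRESHOLDS[name]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def rate_page(measurements: dict) -> dict:
    """Rate every measured metric for a single page."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}


if __name__ == "__main__":
    print(rate_page({"lcp_seconds": 2.1, "fid_ms": 180, "cls": 0.31}))
```

Feeding in field data exported from your measurement tool of choice gives a quick per-page overview of which metric needs attention first.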

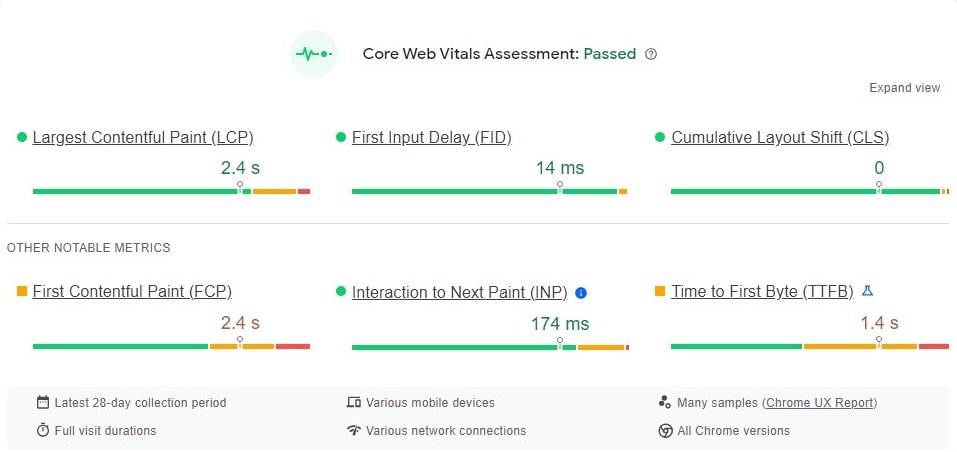

Three further metrics are worth tracking alongside the Core Web Vitals:

- First Contentful Paint (FCP): FCP measures the time from when the page starts loading until any content first appears on the screen, including text, images (including background images), <svg> elements, or non-white <canvas> elements.

- Interaction to Next Paint (INP): INP is a newer web performance metric that replaced First Input Delay (FID) as a Core Web Vital in March 2024. It focuses on how responsive a website is, using information from the Event Timing API. If a website becomes unresponsive when you try to interact with it, that’s a frustrating user experience. INP looks at the delay for all the interactions you make on a page and reports a single value that most interactions fall below. A low INP indicates that the page consistently responds swiftly to most or all of your actions, ensuring a smoother user experience.

- Time to First Byte (TTFB): TTFB is an essential metric that tells us how quickly a web server responds when you try to access a website. It’s a crucial indicator of whether a server is quick or sluggish in answering your requests. When you navigate to a webpage, such as an HTML page, TTFB covers the first step, before the rest of the loading begins.

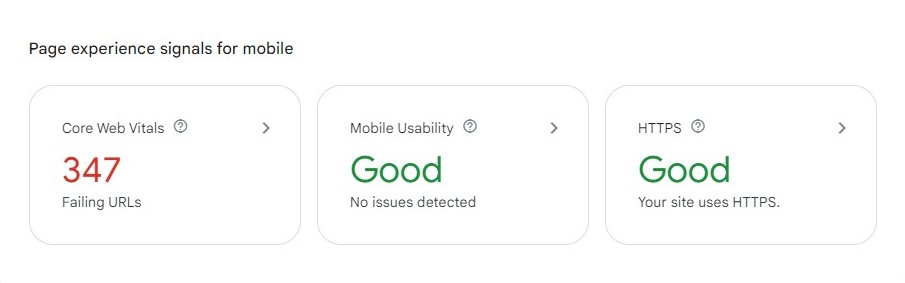

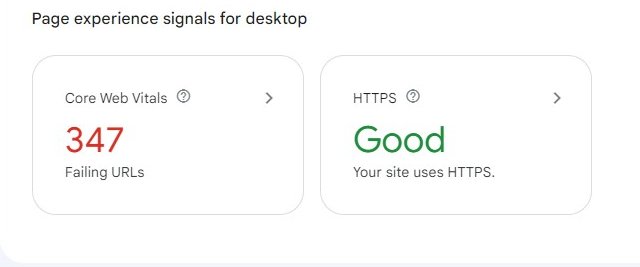

These ranking factors can be assessed through a report available in Google Search Console, offering insights into URLs with potential issues. The Page Experience Report in Google Search Console graphically displays mobile & desktop pages categorized by their experience, distinguishing between those in need of improvement and those offering a good user experience.

Numerous free and paid tools can assist you in boosting your website’s speed and Core Web Vitals, with Google PageSpeed Insights being a primary resource. Additionally, Webpagetest.org is a valuable platform for assessing the speed of different pages on your site across diverse locations, operating systems, and devices.

To enhance your website’s speed, consider implementing the following optimizations:

- Optimize images: use modern image formats like WebP or AVIF, and lazy-load images to improve loading times.

- Minimize HTTP Requests, Enable Browser Caching and minify CSS and JavaScript.

- Leverage Browser Rendering, Enable GZIP Compression and Reduce Server Response Time.

- Create a Responsive Web Design, Reduce 301 and 302 redirects.

- Prioritize Above-the-Fold Content, Server-Side Caching & Optimize Database Queries
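Two of the items above, compression and browser caching, are visible in a page's HTTP response headers. The sketch below is one hedged way to check them: it takes a plain dictionary of headers (however you obtained it) and returns warnings. The function name `audit_response_headers` and the warning texts are hypothetical, and the check is deliberately simplistic.

```python
def audit_response_headers(headers: dict) -> list:
    """Return human-readable warnings for missing speed-related headers."""
    # HTTP header names are case-insensitive, so normalise them first.
    normalised = {key.lower(): value.lower() for key, value in headers.items()}
    warnings = []

    # GZIP or Brotli compression shows up in the Content-Encoding header.
    encoding = normalised.get("content-encoding", "")
    if not any(token in encoding for token in ("gzip", "br")):
        warnings.append("no compression (Content-Encoding is missing)")

    # Browser caching is controlled by the Cache-Control header.
    cache_control = normalised.get("cache-control", "")
    if not cache_control or "no-store" in cache_control:
        warnings.append("browser caching is disabled or not configured")

    return warnings


if __name__ == "__main__":
    sample = {"Content-Type": "text/html", "Cache-Control": "max-age=3600"}
    for warning in audit_response_headers(sample):
        print("Warning:", warning)
```

An empty list means both checks passed; it does not mean the page is fast, only that these two basics are in place.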

2. Check your website for crawl errors:

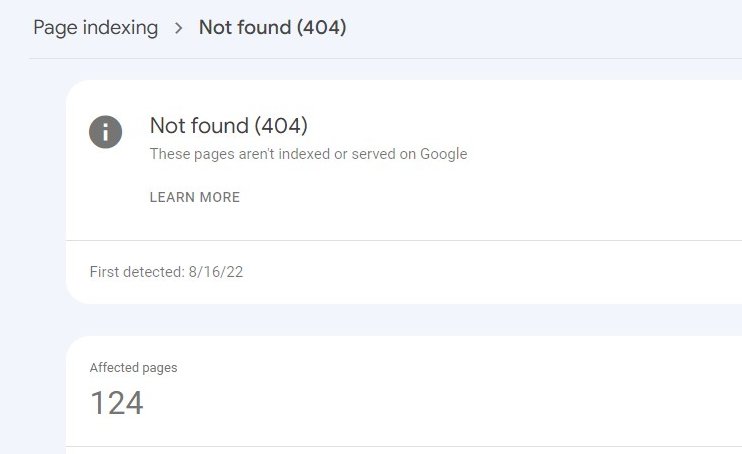

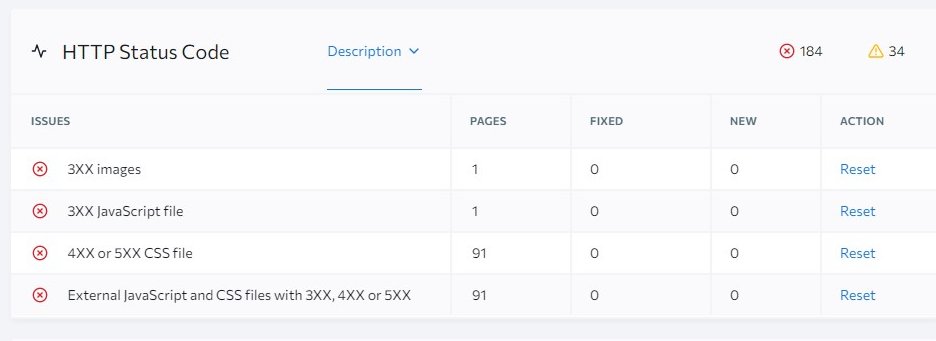

Next, it’s essential to ensure that your website is free from crawl errors. Crawl errors happen when a search engine attempts to access a page on your website but encounters difficulties, and they can lead to indexing problems.

You can use tools like SE Ranking or one of the many other online SEO tools to assist you in this task. After you’ve conducted an audit of your website, examine it for any crawl errors. You can also verify this using Google Search Console.

When searching for crawl errors, it’s important to…

- Ensure that all redirects are correctly implemented with 301 redirects.

- Investigate any 4xx and 5xx error pages to determine where you want to redirect them.

Remember: to take your redirect efforts a step further, watch for redirect chains and loops, where a URL redirects through several other URLs before reaching its destination (or never reaches one). These increase loading time.
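Redirect chains and loops are easy to detect once you have a list of redirects from a crawl. The following is a minimal sketch that assumes the crawl data is available as a dictionary mapping each redirecting URL to its target; the function name `trace_redirects` and the sample paths are hypothetical.

```python
def trace_redirects(start_url: str, redirects: dict, max_hops: int = 10):
    """Follow redirects from start_url and classify the result.

    `redirects` maps a URL to the URL it redirects to (hypothetical crawl data).
    Returns (path, status) where status is 'ok', 'chain', or 'loop'.
    """
    path = [start_url]
    seen = {start_url}
    current = start_url
    while current in redirects and len(path) <= max_hops:
        current = redirects[current]
        path.append(current)
        if current in seen:
            # We have come back to a URL already visited: a redirect loop.
            return path, "loop"
        seen.add(current)

    hops = len(path) - 1
    # More than one hop means the first redirect should point at the final URL.
    status = "chain" if hops > 1 else "ok"
    return path, status


if __name__ == "__main__":
    crawl = {"/old-page": "/interim-page", "/interim-page": "/new-page"}
    print(trace_redirects("/old-page", crawl))
```

For a chain, the fix is to point the first URL straight at the last entry in the returned path with a single 301.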

3. Enhancing User Experience and SEO: Repairing Broken Internal and Outbound Links

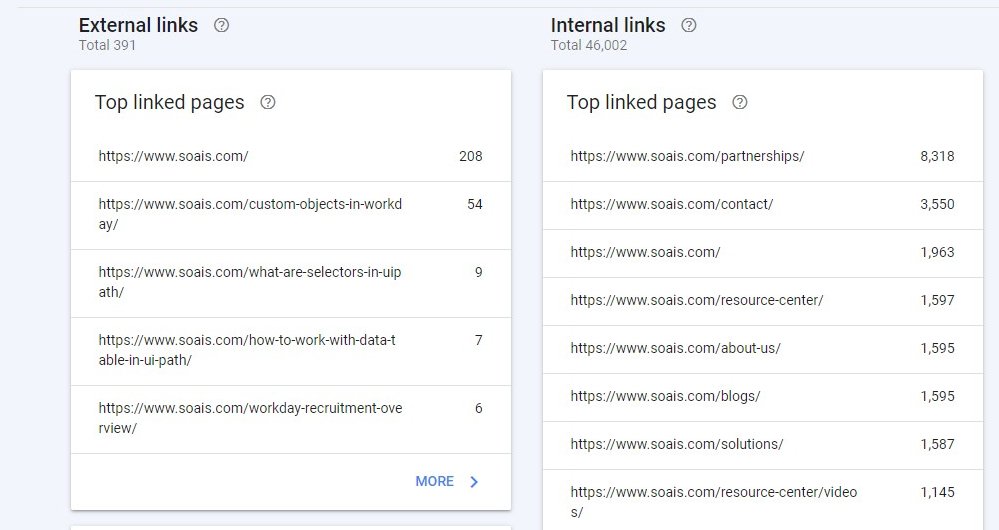

A well-structured and functional link system is vital for an optimal user experience and improved search engine performance. Few things are more frustrating for visitors than clicking on a link within your website, only to be greeted by a non-functional or misdirected 404 URL. For more detail, see “The Most Common Internal Link Building Mistakes,” a study by SEMrush. You can also check your external and internal links in Google Search Console.

To ensure a seamless browsing experience and better SEO for your website in the UK, it’s essential to address the following aspects:

- Redirect Issues: Identify links employing 301 or 302 redirects, as they can impact user experience and search engine rankings.

- Error Pages (4XX): Eliminate links leading to error pages (commonly known as 4XX errors) to maintain website credibility.

- Orphaned Pages: Locate and rectify pages that lack internal links, ensuring that valuable content is accessible to users.

- Deep Internal Linking: Simplify your internal linking structure, preventing content from becoming buried deep within the website.

- Using Descriptive and Relevant Anchor Text: In essence, Google expects all buttons and links on your website to be exceptionally clear about their intended user actions. In simpler terms, the era of using generic labels like “Learn More” or “Click Here” for buttons is now over.

To rectify these issues, you should promptly update target URLs or, when necessary, remove non-existent links altogether. By addressing these concerns, you can significantly enhance both user satisfaction and your website’s performance in search engine results.
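Orphaned pages and deeply buried content can both be found by walking the internal link graph from the home page. The sketch below assumes crawl data in the form of a dictionary mapping each known page to the pages it links to; for simplicity it treats any page that cannot be reached from the home page as orphaned. The name `audit_internal_links` and the sample paths are hypothetical.

```python
from collections import deque


def audit_internal_links(links: dict, home: str = "/"):
    """Return (click_depths, orphaned_pages) for a site's internal link graph.

    `links` maps every known page to the list of pages it links to
    (hypothetical crawl data).
    """
    depths = {home: 0}
    queue = deque([home])
    # Breadth-first search gives the minimum number of clicks to each page.
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)

    # Pages the crawl knows about but that cannot be reached from the home page.
    orphaned = sorted(page for page in links if page not in depths)
    return depths, orphaned


if __name__ == "__main__":
    site = {
        "/": ["/services", "/blog"],
        "/services": ["/services/seo"],
        "/services/seo": [],
        "/blog": [],
        "/old-landing": ["/"],
    }
    depths, orphaned = audit_internal_links(site)
    print("Click depths:", depths)
    print("Orphaned pages:", orphaned)
```

Pages with a large click depth are candidates for extra internal links from higher-level pages.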

4. Optimize Your Website for Search Engines and Users: Eliminating Duplicate and Thin Content

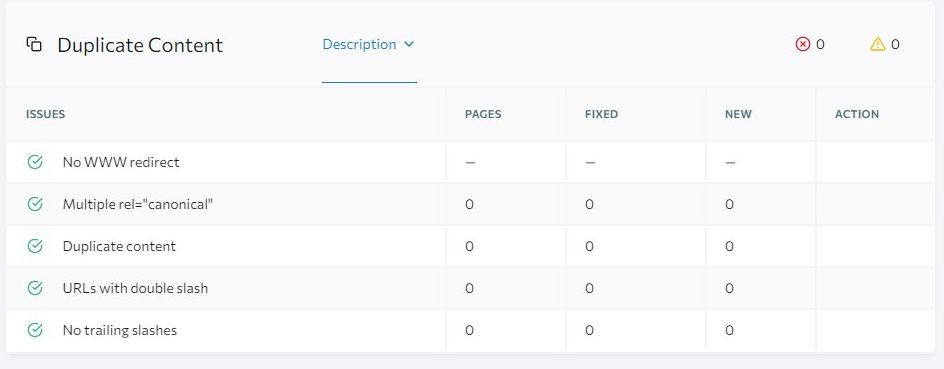

Ensure the quality of your website’s content by eliminating duplicate or low-quality material. Duplicate content can emerge from various sources, such as replicated pages resulting from faceted navigation, maintaining multiple site versions, or using scraped or copied information.

It is vital to allow search engines to index only one version of your website. For instance, search engines perceive the following domains as distinct sites rather than a unified one:

http://mindnmatter.co.uk/

http://www.mindnmatter.co.uk/

https://www.mindnmatter.co.uk/

https://mindnmatter.co.uk/

To resolve duplicate content issues, consider these approaches:

- 301 Redirects: Set up 301 redirects to the primary URL version. Here, the preferred version is “https://mindnmatter.co.uk/”. The other three should be redirected with a 301 status code to this version.

- No-Index or Canonical Tags: Implement no-index or canonical tags on duplicate pages, indicating to search engines the preferred source of content.

- Preferred Domain Configuration: Google Search Console no longer offers a preferred-domain setting, so signal your preferred version consistently through 301 redirects, canonical tags, internal links, and your XML sitemap.

- Parameter Handling: Google has also retired the URL Parameters tool in Search Console, so handle URLs with varying parameters through canonical tags, consistent internal linking, and robots.txt rules where appropriate.

Whenever possible, remove any redundant content to enhance both user experience and search engine rankings.
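To make the redirect rule concrete, here is a small sketch that maps any protocol or www variant of a URL to the single preferred version, which is the target a 301 redirect should point to. It assumes the preferred version is the non-www HTTPS one named above; the function names are hypothetical, and real redirects would be configured on your web server rather than in a script like this.

```python
from urllib.parse import urlsplit, urlunsplit

PREFERRED_SCHEME = "https"
PREFERRED_HOST = "mindnmatter.co.uk"  # the non-www version named above


def preferred_url(url: str) -> str:
    """Map any scheme/www variant of a URL to the single preferred version."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host != PREFERRED_HOST:
        return url  # leave URLs on other domains untouched
    path = parts.path or "/"
    return urlunsplit((PREFERRED_SCHEME, host, path, parts.query, parts.fragment))


def needs_redirect(url: str) -> bool:
    """True when the URL is a duplicate variant that should 301 elsewhere."""
    return preferred_url(url) != url


if __name__ == "__main__":
    for variant in ("http://mindnmatter.co.uk/", "http://www.mindnmatter.co.uk/",
                    "https://www.mindnmatter.co.uk/", "https://mindnmatter.co.uk/"):
        print(variant, "->", preferred_url(variant), "| redirect:", needs_redirect(variant))
```

The same target URL is also what a canonical tag on a duplicate page would reference.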

5. Keep Your URL Structure Clean and Simple, as Google Recommends

Complex URLs can pose issues for web crawlers by generating an excessive number of URLs that direct to identical or closely related content within your website.

Consequently, Googlebot might face challenges when attempting to fully index all the content on your site.

Here are some examples of problematic and non-readable URLs for Google:

Non-ASCII characters in the URL:

https://www.example.com/🦙✨

Unreadable, long ID numbers in the URL:

https://www.example.com/index.php?id_sezione=360&sid=3a5ebc944f41daa6f849f730f1

To ensure that website URLs have a clean structure, follow these best practices:

1. Use Descriptive and Meaningful URLs:

Choose URLs that reflect the content of the page. Avoid generic or cryptic URLs.

Use keywords related to the content of the page in the URL to improve SEO.

Example: https://mindnmatter.co.uk/services/web-and-app-development/

2. Use Hyphens to Separate Words:

Use hyphens (-) to separate words in the URL, as search engines and users find them more readable and user-friendly than underscores or other characters.

Bad Example: https://www.example.com/summer_clothing/filter?color_profile=dark_grey

3. Keep URLs Short and Concise:

Shorter URLs are easier to remember and share. Avoid excessively long URLs that can confuse users.

4. Use Lowercase Letters:

Keep all characters in the URL in lowercase to maintain consistency and prevent case sensitivity issues.

5. Avoid Special Characters and Spaces:

Use only letters, numbers, hyphens, and a few special characters like slashes (/) and dots (.) in URLs. Avoid spaces and other special characters.

6. Remove Unnecessary Parameters:

If possible, eliminate unnecessary query parameters from your URLs. Cleaner URLs are easier to understand and share.

7. Implement a Logical Folder Structure:

Organize your website content into a logical folder structure, which helps users and search engines navigate your site more easily.

Bad Example (opaque parameters instead of a clear folder path): https://www.example.com/search/noheaders?click=6EE2BF1AF6A3D705D5561B7C3564D9C2&clickPage=OPD+Product+Page&cat=79

8. Avoid URL Session IDs:

Avoid using session IDs in your URLs, as they can make URLs lengthy and less user-friendly.
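Several of these practices can be checked automatically. The sketch below is a simple URL "linter" that flags the issues described in this section; the function name `lint_url`, the wording of the messages, and the list of session parameter names are illustrative assumptions rather than any official rule set.

```python
from urllib.parse import parse_qs, urlsplit

# Illustrative list of parameter names that often carry session IDs.
SESSION_PARAMS = {"sid", "sessionid", "phpsessid", "jsessionid"}


def lint_url(url: str) -> list:
    """Return a list of URL-structure issues found in `url`."""
    parts = urlsplit(url)
    issues = []
    if not url.isascii():
        issues.append("contains non-ASCII characters")
    if any(ch.isupper() for ch in parts.path):
        issues.append("path contains uppercase letters")
    if "_" in parts.path:
        issues.append("path uses underscores instead of hyphens")
    if " " in url or "%20" in url:
        issues.append("contains spaces")
    params = parse_qs(parts.query, keep_blank_values=True)
    if params:
        issues.append(f"has {len(params)} query parameter(s)")
    if SESSION_PARAMS & {name.lower() for name in params}:
        issues.append("carries a session ID")
    return issues


if __name__ == "__main__":
    for candidate in (
        "https://mindnmatter.co.uk/services/web-and-app-development/",
        "https://www.example.com/summer_clothing/filter?color_profile=dark_grey",
        "https://www.example.com/index.php?id_sezione=360&sid=3a5ebc944f41daa6f849f730f1",
    ):
        print(candidate, "->", lint_url(candidate) or "clean")
```

Running a check like this over the URL list from a site crawl gives a quick shortlist of URLs worth restructuring.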

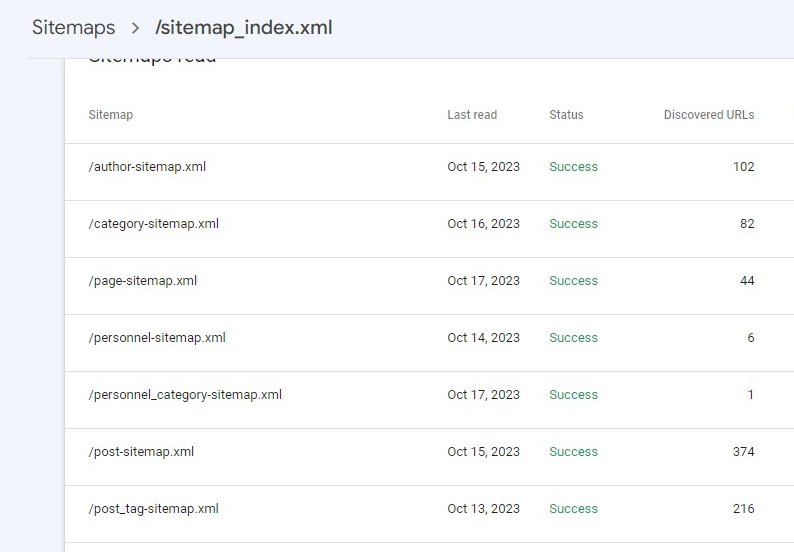

6. Make Sure Your Website Sports a Well-Optimized XML Sitemap

XML sitemaps play a crucial role in informing search engines about your website’s structure and which URLs you want them to crawl and index for the Search Engine Results Pages (SERPs).

An ideal XML sitemap should encompass the following elements:

- Recent additions to your site, such as new blog posts, products, and more.

- Only URLs that return a 200 status code (indicating a successful request).

- No more than 50,000 URLs. If your website contains more URLs, consider creating multiple XML sitemaps. This will help make the most of your website’s crawl budget.

Exclude the following types of URLs from the XML sitemap:

- URLs with parameters

- URLs that 301-redirect elsewhere, carry a canonical tag pointing to a different URL, or have no-index tags

- URLs with 4xx or 5xx status codes

- Duplicate content

To ensure the effectiveness of your XML sitemap, you can monitor it using the Index Coverage report within Google Search Console. This will help identify and rectify any potential issues with the indexing process.
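The inclusion and exclusion rules above can be sketched in a few lines. This example assumes you already have crawl results as a dictionary of URL to HTTP status code; it keeps only parameter-free URLs that return 200 and splits the output into files of at most 50,000 URLs. The function names and sample URLs are hypothetical, and checks for canonical or no-index tags are left out for brevity.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

MAX_URLS_PER_SITEMAP = 50_000
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def eligible(url: str, status: int) -> bool:
    """Keep only parameter-free URLs that return a 200 status code."""
    return status == 200 and not urlsplit(url).query


def build_sitemaps(pages: dict, limit: int = MAX_URLS_PER_SITEMAP) -> list:
    """Return one XML string per sitemap file, each with at most `limit` URLs.

    `pages` maps each crawled URL to its status code (hypothetical crawl data).
    """
    urls = sorted(url for url, status in pages.items() if eligible(url, status))
    sitemaps = []
    for start in range(0, len(urls), limit):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for url in urls[start:start + limit]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        sitemaps.append(ET.tostring(urlset, encoding="unicode"))
    return sitemaps


if __name__ == "__main__":
    crawl = {
        "https://www.example.com/": 200,
        "https://www.example.com/blog/": 200,
        "https://www.example.com/old-page": 301,
        "https://www.example.com/search?q=seo": 200,
        "https://www.example.com/missing": 404,
    }
    for xml_text in build_sitemaps(crawl):
        print(xml_text)
```

When more than one file is produced, the files are normally listed in a sitemap index, which this sketch does not generate.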

7. You should have a well-optimized Robots.txt file

The robots.txt file, located in the root directory of your website, serves as a guide for search engine crawlers like Googlebot. It directs these crawlers on which pages or files to request from your site, preventing your website from being overwhelmed by excessive requests.

When search engine crawlers visit your website, one of their first tasks is to fetch and review the robots.txt file. Based on the instructions within this file, they determine which URLs they are allowed to crawl on your website.

Consider the following types of URLs that you should prevent from being accessed through your website’s robots.txt file:

- Temporary files: These are files meant only for temporary use that have no value in search results.

- Admin pages: Keep crawlers away from your website’s administrative pages by disallowing them in the robots.txt file. Note that robots.txt is publicly readable and is not a security control, so protect these pages with authentication as well.

- Cart & checkout pages: Exclude shopping cart and checkout pages from crawling, since they offer nothing useful to searchers.

- Search-related pages: Prevent search results and related pages from showing up in search engine results.

- URLs with parameters: Disallow URLs that contain various parameters, which may not need to be indexed by search engines.

Additionally, remember to specify the location of your website’s XML sitemap within the robots.txt file. You can use the robots.txt report in Google Search Console, which replaced the older robots.txt tester, to ensure that your file is correctly configured and functioning as intended.
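As an illustration, the sketch below holds a sample robots.txt covering the URL types listed above and checks it with Python's standard `urllib.robotparser` module. The paths and the sitemap URL are hypothetical placeholders. Rules for URLs with parameters are left out because they typically rely on wildcard patterns, which this standard-library parser may not interpret the way Googlebot does.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt with hypothetical paths; adapt them to your own site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /tmp/
Disallow: /admin/
Disallow: /cart/
Disallow: /checkout/
Disallow: /search
Sitemap: https://www.example.com/sitemap.xml
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)

if __name__ == "__main__":
    for path in ("/services/", "/admin/login", "/cart/", "/search/results"):
        url = "https://www.example.com" + path
        verdict = "allowed" if rules.can_fetch("Googlebot", url) else "blocked"
        print(path, "->", verdict)
    print("Sitemaps:", rules.site_maps())
```

A quick local check like this helps catch a rule that accidentally blocks an important section before the file goes live.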

8. Use structured data or schema markup:

We believe structured data is still a valuable tool for enhancing your web content’s visibility and providing meaningful information to Google. It helps Google understand the content’s purpose and can make your organic search listings more prominent on the Search Engine Results Pages (SERPs).

One widely used form of structured data is known as ‘schema markup.’

Schema markup offers various data structuring options, covering details about individuals, locations, organizations, local businesses, customer reviews, and more.

Online schema markup generators make it easier to create schema markup for your website, and Google’s Rich Results Test, the successor to the retired Structured Data Testing Tool, is a helpful resource for validating it.
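For a sense of what a generator produces, here is a minimal sketch that builds JSON-LD markup for a local business using schema.org's `LocalBusiness` and `PostalAddress` types. The function name and all business details are placeholders, and a real listing would usually include more properties such as opening hours.

```python
import json


def local_business_jsonld(name: str, url: str, telephone: str,
                          city: str, country: str) -> str:
    """Return a <script> tag containing LocalBusiness JSON-LD markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "addressLocality": city,
            "addressCountry": country,
        },
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    # Placeholder details for illustration only.
    print(local_business_jsonld(
        name="Example Ltd",
        url="https://www.example.com/",
        telephone="+44 0000 000000",
        city="Birmingham",
        country="GB",
    ))
```

The resulting snippet goes into the page's HTML, after which it can be checked with a validation tool.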

Conclusions:

Even minor adjustments to your website can lead to fluctuations in its technical SEO health, potentially affecting its performance. When you modify internal or external links or alter anchor text within your site or linked sites, it may result in broken links.

Additionally, during processes such as launching new web pages, organizational changes, site migrations, or redesigns, crucial SEO elements like schema markup, sitemaps, and robots.txt files may not be correctly transferred or placed in a way that Google can recognize.

To safeguard your website’s organic traffic and performance, it’s vital to establish a proactive strategy. Whether you hire an SEO agency in Birmingham, Hertfordshire, or elsewhere in the UK, or handle SEO yourself, you should regularly conduct site crawls and assess each aspect outlined in this checklist whenever significant website changes are implemented. By doing so, you can ensure that your technical SEO remains robust, preventing disruptions in organic website traffic.